Artificial intelligence and machine learning can help users sift through terabytes of data to arrive at a conclusion driven by relevant information, prior results, and statistics. But how much should we trust those conclusions—especially given AI’s tendency to hallucinate, or simply make things up?

Consider the use of an AI health care system that predicts a patient’s medical diagnosis or risk of disease. A doctor’s choice to trust that AI’s advice can have a major impact on the patient’s health outcomes; in such high-stakes scenarios, the cost of a hallucination could be someone’s life.

AI predictions can vary in trustworthiness, for any number of reasons: maybe the data are bad to begin with, or the system hasn’t seen enough examples of the current situation it’s facing to have sufficiently learned what to do in that scenario. Additionally, most of the modern deep neural networks behind AI predict singular outcomes—that is, whichever has the “highest score” based on a particular model. In the context of health care, this would mean the aforementioned AI system would return only one probable disease diagnosis out of the many possibilities. But just how certain is the AI that it’s got the correct answer?

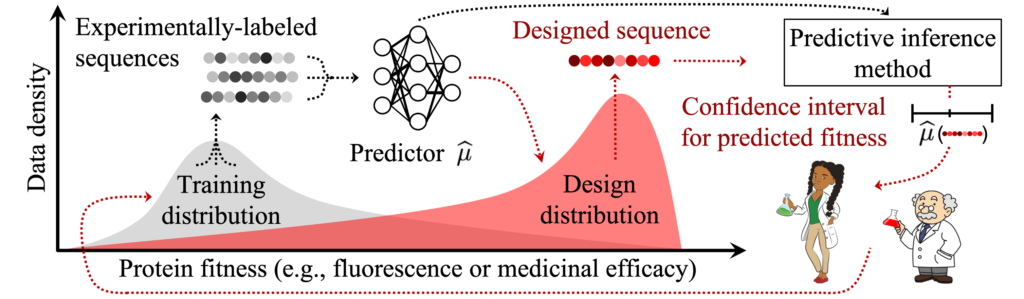

Uncertainty quantification (UQ) methods attempt to discover just that, generally by prompting machine learning (ML) models to return a set of possible outcomes: the larger the predicted set, the more uncertain the model is in its prediction. However, two key challenges still limit the real-world use of UQ in AI and ML. The first is caused by discrepancies between the data a model is trained on and the data it sees at deployment. The second is that many UQ approaches require either intensive computing power or an impractical amount or quality of data that may simply be unavailable in real-world scenarios.
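
To make the idea of a predicted set concrete, here is a minimal sketch of split conformal prediction, one widely used UQ technique. It is not the JAWS-X procedure. The function names, the toy calibration data, and the choice of "1 minus the probability of the true label" as the score are all assumptions made for illustration.

```python
import math

def conformal_threshold(cal_probs, cal_labels, alpha=0.1):
    """Score threshold computed from a held-out calibration set.

    cal_probs[i][k] is the model's probability for label k on example i
    (hypothetical inputs; any classifier's probabilities would do).
    """
    n = len(cal_labels)
    # Nonconformity score: 1 minus the probability given to the true label.
    scores = sorted(1.0 - probs[label]
                    for probs, label in zip(cal_probs, cal_labels))
    # Rank of the finite-sample-corrected (1 - alpha) quantile.
    k = math.ceil((n + 1) * (1 - alpha))
    # Too few calibration points for this alpha: keep every label.
    return scores[k - 1] if k <= n else math.inf

def prediction_set(probs, threshold):
    """All labels whose score is no larger than the threshold."""
    return [label for label, p in enumerate(probs) if 1.0 - p <= threshold]

# Toy calibration data: three possible diagnoses, true label always 0.
true_label_probs = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.35]
cal_probs = [[p, (1 - p) / 2, (1 - p) / 2] for p in true_label_probs]
cal_labels = [0] * len(cal_probs)

threshold = conformal_threshold(cal_probs, cal_labels, alpha=0.2)
confident_set = prediction_set([0.90, 0.05, 0.05], threshold)
ambiguous_set = prediction_set([0.40, 0.35, 0.25], threshold)
print(confident_set, ambiguous_set)
```

On this toy data the confident forecast yields a one-label set and the ambiguous forecast yields a two-label set, which is the sense in which a larger set signals more uncertainty.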

But a group of Hopkins researchers—Suchi Saria, the John C. Malone Associate Professor of Computer Science and founding research director of the Malone Center for Engineering in Healthcare; Anqi “Angie” Liu, an assistant professor of computer science; and Drew Prinster, a PhD candidate in the Department of Computer Science—recently presented a novel collection of UQ methods for complex AI and ML models. Called “JAWS-X,” their proposed methods promise to flexibly balance computational and data-use efficiency for practical UQ deployment.

“This is especially important when data are scarce or costly to collect, such as in health care, where patient privacy considerations rightfully limit data use, or in biomolecular design, where data collection requires expensive and time-consuming experimental procedures,” Prinster explains.

Illustration of a biomolecular design data shift scenario.

Building on prior work published at the 2022 Conference on Neural Information Processing Systems, the team says they have generalized previous UQ methods to allow for real-world data shifts while also striking a flexible, favorable balance between computing requirements and data-use efficiency.

A technique called “importance weighting” allows the JAWS-X UQ methods to adapt their uncertainty estimates in response to data shifts, the team explains. In the example of an AI health care system encountering different patient demographics than what it was trained on, the JAWS-X methods account for this shift by emphasizing the information from the minority-group patients they have seen before in the training data and de-emphasizing information from the majority group in the training data.
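
As a rough illustration of importance weighting, the sketch below reweights calibration scores by the ratio of deployment to training group frequencies and then takes a weighted quantile, in the spirit of weighted conformal prediction. It is not the JAWS-X procedure. The group names, the frequencies, and the scores are invented for the example, and the group frequencies are assumed to be known rather than estimated.

```python
import math

def importance_weights(groups, train_freq, deploy_freq):
    """Likelihood ratio (deployment / training) for each example's group."""
    return [deploy_freq[g] / train_freq[g] for g in groups]

def weighted_threshold(scores, weights, test_weight, alpha=0.1):
    """Smallest score whose normalized cumulative weight reaches 1 - alpha.

    The test point's own weight sits on +infinity, so the result is
    infinite when the calibration weights alone cannot reach that level.
    """
    total = sum(weights) + test_weight
    cumulative = 0.0
    for score, weight in sorted(zip(scores, weights)):
        cumulative += weight
        if cumulative / total >= 1 - alpha:
            return score
    return math.inf

# Hypothetical shift: the minority group is 20% of training data
# but 70% of the patients seen at deployment.
train_freq = {"majority": 0.8, "minority": 0.2}
deploy_freq = {"majority": 0.3, "minority": 0.7}

groups = ["majority"] * 8 + ["minority"] * 2
# Invented calibration scores: the model errs more on the minority group.
scores = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40, 0.60, 0.90]

weights = importance_weights(groups, train_freq, deploy_freq)
test_weight = deploy_freq["minority"] / train_freq["minority"]

unweighted = weighted_threshold(scores, [1.0] * len(scores), 1.0, alpha=0.3)
shifted = weighted_threshold(scores, weights, test_weight, alpha=0.3)
print(unweighted, shifted)
```

Because the minority-group scores carry more weight after the shift, the weighted threshold comes out higher than the unweighted one, so the resulting prediction sets widen to reflect the added uncertainty.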

And where previous methods offered a “pick-your-poison” choice between either prohibitive computational costs or inefficient—and potentially harmful—data use, the team claims their JAWS-X methods avoid this false dichotomy by allowing users to flexibly adapt resource requirements needed for their specific UQ problem. In other words, depending on the computing power available to researchers, they can choose a JAWS-X method to match so that they achieve a balance between model performance and fast computation.

The Hopkins computer scientists report experimental results from a fluorescent protein design use case showing that their JAWS-X methods substantially improve the efficiency and informativeness of AI and ML UQ compared with state-of-the-art approaches.

“This is an important step toward a framework for building appropriate trust dynamics in human-AI teams,” says Prinster.

Their paper was accepted to the 2023 International Conference on Machine Learning in Honolulu, Hawaii, and was also selected for an oral presentation at the prestigious conference, a designation awarded to only the top 2 percent of conference submissions.